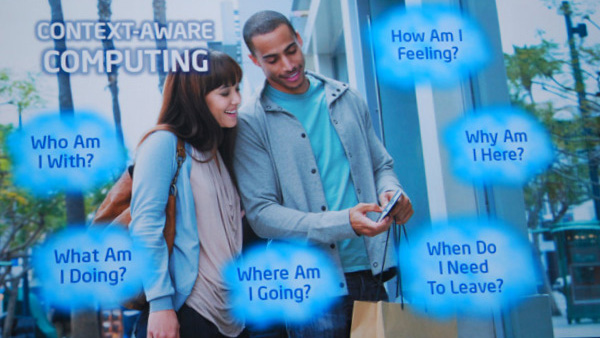

Future Lab: Context Aware

One of the next frontiers of computing is to create systems that understand the user. Context-aware devices of the near future might recommend restaurants, monitor a user’s health, or screen phone calls, all based on information collected from device sensors and casual input data. Two of the most powerful sensors are already on our devices: the microphone and the camera. In this episode of Future Lab, we talk with researchers who are exploring how to make devices context aware.

Interviewees:

Andrew Campbell, Professor of Computer Science, Dartmouth College

Rosalind Picard, Professor of Media Arts and Sciences, M.I.T.

Lama Nachman, Senior Researcher, Intel Labs

Demos:

Mobile Sensing Group at Dartmouth

See also:

Raising the IQ on Smartphones
